When I first began managing Linux servers, one of the most perplexing metrics I encountered was the load average.

At first glance, the numbers seemed abstract and elusive—what did a load average of 2.5 or 5.0 actually mean for system performance?

I vividly remember the first time I was faced with a server running unusually slow.

The users were complaining about lag, and applications were struggling to keep up.

My immediate reaction was to dive into system monitoring, and that’s when I encountered the load average for the first time.

Checking the output of the uptime command, I saw something like:

12:34:56 up 2 days, 5:17, 3 users, load average: 24.25, 33.80, 43.60

The numbers seemed high compared to what I was used to, and I quickly realized that this was a key indicator of system performance.

With a load average consistently above the number of vCPUs, it was clear that the system was under significant stress.

Today, we will explore the concept of load average, learn how to interpret its values, and understand how to monitor high load averages on Linux systems.

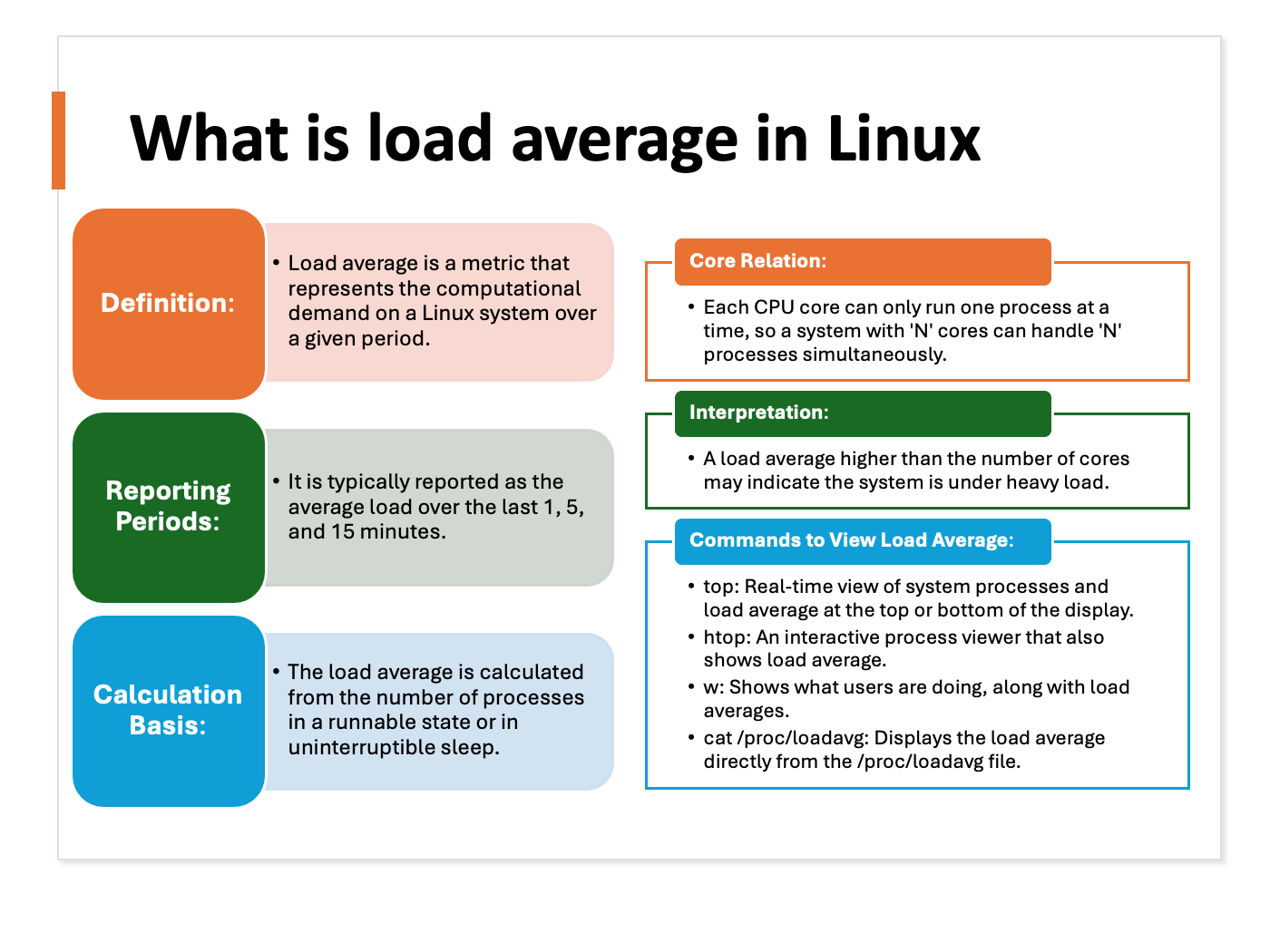

Understanding Load Average

Load average represents the average number of processes that are either in a runnable or uninterruptible state over a given period.

This metric is calculated over three intervals: 1 minute, 5 minutes, and 15 minutes.

- 1-Minute Load Average: Reflects the system load in the most recent minute.

- 5-Minute Load Average: Provides an average over the past five minutes.

- 15-Minute Load Average: Shows the average load over the last fifteen minutes.

These values give a snapshot of system demand and can indicate whether the system is under heavy load or operating normally.

System load is a measure of the amount of work the system is being asked to do, meaning the number of currently active and queued processes. It is a count of processes, not a percentage; it only tells us how close the system is to capacity once we compare it with the number of CPUs.

Load averages, which represent system activity over time, give a much more accurate picture of the state of our system than a single instantaneous load value.

Example of Load Average In Linux

For example, if the uptime command returns:

12:34:56 up 2 days, 5:17, 3 users, load average: 0.75, 0.80, 0.85

- 0.75: Average load over the last 1 minute.

- 0.80: Average load over the last 5 minutes.

- 0.85: Average load over the last 15 minutes.

In this scenario, the system’s load average has been relatively stable.
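Comparing the 1-minute and 15-minute values shows whether load is building up or draining away. The sketch below is one way to express that comparison; `load_trend` is a hypothetical helper name, and the default tolerance of 0.25 is an arbitrary assumption rather than a standard threshold.

```shell
# Hypothetical helper: classify the trend from the 1-minute and 15-minute values.
# Third argument is an optional tolerance (assumed default: 0.25).
load_trend() {
  awk -v one="$1" -v fifteen="$2" -v tol="${3:-0.25}" 'BEGIN {
    diff = one - fifteen
    if (diff > tol)       print "rising"
    else if (diff < -tol) print "falling"
    else                  print "stable"
  }'
}

load_trend 0.75 0.85      # values from the uptime example above: prints "stable"
load_trend 24.25 43.60    # values from the overloaded server earlier: prints "falling"
```

A 1-minute value well below the 15-minute value, as in the second call, suggests the worst of the load has already passed.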

Here are more examples.

A load average of 1.27 on a system with one CPU would mean that, on average, the CPU is working to capacity and is overloaded by 27%: roughly 0.27 processes are waiting for their turn with the CPU at any moment.

By contrast, a load average of 0.27 on a system with one CPU would mean that, on average, the CPU was unused for 73% of the time.

On a four-core system, we might see load averages in the range of 2.1, which would be just over 50% of capacity (or idle for about 47.5% of the time).

So the load average is related to the number of CPUs on our Linux system.

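For example, a load average of 20 on a system with 20 CPUs is very different from a load average of 20 on a system with 10 CPUs: the first system is roughly at capacity, while the second has about twice as many active processes as it has CPUs.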
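Dividing the load average by the CPU count makes this comparison explicit. The following is a minimal sketch; `load_per_cpu` is a hypothetical helper name, and the live check at the end assumes `/proc/loadavg` and the `nproc` command are available.

```shell
# Hypothetical helper: load average divided by the number of CPUs.
# A result near 1.00 means the CPUs are roughly at capacity.
load_per_cpu() {
  awk -v load="$1" -v cpus="$2" 'BEGIN { printf "%.2f\n", load / cpus }'
}

load_per_cpu 20 20   # prints 1.00
load_per_cpu 20 10   # prints 2.00

# On a live Linux system, use the 1-minute value and the real CPU count.
if [ -r /proc/loadavg ] && command -v nproc >/dev/null 2>&1; then
  read -r one rest < /proc/loadavg
  load_per_cpu "$one" "$(nproc)"
fi
```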

The load on a system is the total number of running and blocked processes.

For example, if two processes were running and five were blocked (for instance, waiting on I/O), the system’s load would be seven.

The load average is that load averaged over a given period of time.

Typically, the load average is taken over 1 minute, 5 minutes, and 15 minutes. This enables you to see how the load changes over time.

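We can use the following command to count the processes that are currently running or blocked. This is an instantaneous count (and it includes the ps command itself), so it should be close to the 1-minute load average rather than exactly equal to it.

ps -eo s,user,cmd | grep '^[RD]' | wc -l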
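It can also help to see the running (R) and blocked (D) counts separately, since they point to different problems. The sketch below assumes input in the shape of `ps -eo s,cmd` output; `count_states` is a hypothetical helper name and the sample processes are made up.

```shell
# Hypothetical helper: count running (R) and uninterruptible (D) entries.
# Expects the process state as the first field of each input line.
count_states() {
  awk '$1 ~ /^R/ { r++ }
       $1 ~ /^D/ { d++ }
       END { printf "running=%d blocked=%d total=%d\n", r, d, r + d }'
}

# Made-up sample; prints: running=2 blocked=3 total=5
count_states <<'EOF'
S CMD
S /sbin/init
R /usr/bin/python3 worker.py
R ps -eo s,cmd
D cp /mnt/nfs/big.iso /tmp
D tar xf /mnt/nfs/backup.tar
D rsync -a /mnt/nfs/ /data
S sshd
EOF

# On a live system:
if command -v ps >/dev/null 2>&1; then
  ps -eo s,cmd | count_states
fi
```

A high blocked count with few running processes usually points at storage or network waits rather than a shortage of CPU.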

Check System Load Average in Linux

We have 4 ways to check the load average on Linux.

- cat /proc/loadavg

- uptime

- w

- top

The procedure to check load average in Linux is as follows:

- Open your Terminal.

- Type in top or cat /proc/loadavg and hit Enter.

- In top, look at the “load average:” field on the first line of the output. In /proc/loadavg, the first three numbers are the 1-minute, 5-minute, and 15-minute load averages. These numbers go up and down as the amount of work the system is handling changes.

- If the load average is high relative to the number of CPUs, your computer is under a lot of stress and is struggling to keep up with the demand. This usually shows up as slow performance.
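The line in /proc/loadavg has five fields: the three load averages, a running/total count of scheduling entities, and the PID of the most recently created process. The sketch below labels them; `describe_loadavg` is a hypothetical helper name and the sample line is made up.

```shell
# Hypothetical helper: label the five fields of a /proc/loadavg line.
describe_loadavg() {
  awk '{
    split($4, q, "/")   # 4th field looks like "runnable/total"
    printf "1min=%s 5min=%s 15min=%s runnable=%s total=%s last_pid=%s\n",
           $1, $2, $3, q[1], q[2], $5
  }'
}

# Made-up sample line; prints:
# 1min=0.75 5min=0.80 15min=0.85 runnable=2 total=345 last_pid=12345
echo "0.75 0.80 0.85 2/345 12345" | describe_loadavg

# On a live Linux system:
if [ -r /proc/loadavg ]; then
  describe_loadavg < /proc/loadavg
fi
```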

Here is one high-load example from our production system.

The load went above 170 on one server, which has 64 vCPUs.

After checking, we found that many processes were blocked because the server had lost network connectivity to its NFS storage.

The load average closely matched the number of blocked processes. In the end, we fixed the issue by rebooting the server.
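In a case like this, listing only the blocked processes along with the kernel function they are waiting in can show what they have in common. The following is a sketch, assuming the procps version of ps; `blocked_only` is a hypothetical helper name, and the sample rows are illustrative rather than taken from the incident above.

```shell
# Hypothetical helper: keep the header row plus processes in state D.
# Expects input in the shape of `ps -eo pid,stat,wchan:32,cmd`.
blocked_only() {
  awk 'NR == 1 || $2 ~ /^D/'
}

# Illustrative sample; prints the header and the two D-state rows.
blocked_only <<'EOF'
  PID STAT WCHAN                            CMD
    1 Ss   ep_poll                          /sbin/init
 4242 D    rpc_wait_bit_killable            cp /mnt/nfs/a /tmp
 4243 D+   rpc_wait_bit_killable            ls /mnt/nfs
 5000 R+   -                                ps -eo pid,stat,wchan:32,cmd
EOF

# On a live system:
if command -v ps >/dev/null 2>&1; then
  ps -eo pid,stat,wchan:32,cmd | blocked_only
fi
```

If most of the blocked processes share the same wait channel or touch the same mount point, that is a strong hint about where the bottleneck is.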

Complete Guide to Fix High Load Average in Linux

If you’re experiencing a high load average in Linux, there are a few things you can do to try and resolve the issue. The following is a complete guide to fixing high load averages in Linux:

- Identify the causes of the high load average.

- Resolve any resource bottlenecks.

- Review and optimize system configuration files.

- Investigate and resolve any software issues.

Identifying the causes of a high load average is the first and most important step in fixing the issue.

There are many possible causes of a high load average, from CPU-hungry processes to processes blocked on disk or network I/O, so it’s important to narrow down the possibilities before taking any further action.

The first thing to do is to check the process list and see which process is using the most CPU.

You can do this by typing the following command into the terminal: top

The “top” command will show you a list of all of the processes that are currently running on your computer, and it will show how much CPU each process is using.

If you see a process that is using a lot of CPU, you can stop it by typing the following command: kill <pid>

Where “<pid>” is the process ID for the process that you want to stop.
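For scripts or quick snapshots, the same information can be pulled from ps instead of the interactive top screen. The sketch below is one way to do it; `top_cpu` is a hypothetical helper name and the sample rows are made up.

```shell
# Hypothetical helper: print the header, then the N rows with the highest %CPU.
# Expects input in the shape of `ps -eo pid,pcpu,comm` (header on the first line).
top_cpu() {
  n="${1:-5}"
  {
    IFS= read -r header
    printf '%s\n' "$header"
    sort -k2,2nr | head -n "$n"   # 2nd column is %CPU, sorted descending
  }
}

# Made-up sample; prints the header, then the python3 and postgres rows.
top_cpu 2 <<'EOF'
  PID %CPU COMMAND
    1  0.0 systemd
 2211 97.5 python3
 2304 12.3 postgres
 2410  1.1 sshd
EOF

# On a live system:
if command -v ps >/dev/null 2>&1; then
  ps -eo pid,pcpu,comm | top_cpu 5
fi
```

Note that kill <pid> sends SIGTERM by default, which asks the process to shut down cleanly, so it is worth confirming what the process is before stopping it.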

We can also use the “netstat” command to check network statistics.

The output of the “netstat” command will show us a list of active network connections.

If there are too many connections established, this could be causing the high load average issue.
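Counting connections by state makes it easier to judge whether there really are "too many". This is a minimal sketch that assumes input in the shape of `netstat -ant` output; `count_by_state` is a hypothetical helper name, and the addresses in the sample are made up.

```shell
# Hypothetical helper: count TCP connections per state, most common first.
# Expects `netstat -ant`-style lines, where the state is the 6th field.
count_by_state() {
  awk '$1 ~ /^tcp/ { count[$6]++ }
       END { for (s in count) print count[s], s }' | sort -rn
}

# Made-up sample; prints "3 ESTABLISHED", "2 TIME_WAIT", "1 LISTEN".
count_by_state <<'EOF'
Active Internet connections (servers and established)
Proto Recv-Q Send-Q Local Address           Foreign Address         State
tcp        0      0 0.0.0.0:22              0.0.0.0:*               LISTEN
tcp        0      0 10.0.0.5:443            10.0.0.9:51514          ESTABLISHED
tcp        0      0 10.0.0.5:443            10.0.0.9:51520          ESTABLISHED
tcp        0      0 10.0.0.5:443            10.0.0.7:40022          ESTABLISHED
tcp        0      0 10.0.0.5:443            10.0.0.8:40100          TIME_WAIT
tcp        0      0 10.0.0.5:443            10.0.0.8:40101          TIME_WAIT
EOF

# On a live system, if netstat is installed:
if command -v netstat >/dev/null 2>&1; then
  netstat -ant | count_by_state
fi
```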

We can also use the “ps” command to check the status of processes. The output of the “ps” command will show us a list of all processes running on the system.

By using these commands, we can narrow down the root cause of the issue and fix it.

Once you’ve identified the possible causes of the high load average, you can begin working through each potential solution until the issue is resolved.

Remember, there is no one-size-fits-all solution to fixing a high load average, so you may need to try multiple solutions before finding the one that works for your particular situation.

In some cases, a high load average may be caused by software issues.

If this is the case, you’ll need to investigate the issue and determine what needs to be done to resolve it.

This can often be a more difficult task than resolving resource bottlenecks or optimizing system configuration files, but it’s important to identify and fix any software issues that may be causing problems.
