In Kubernetes, when configuring a Service to expose your applications, you’ll encounter three key port concepts: port, targetPort, and NodePort.

Understanding the distinctions and relationships between these ports is crucial for effectively managing access to your applications running within Pods.

port

- Definition: The port field in a Service specification defines the port on which the Service is exposed inside the Kubernetes cluster.

- Usage: Other components within the cluster communicate with the Service through this port. It acts as a stable internal endpoint for other Pods to access the services the Service provides.

- Example: If a Service sets port to 80, other Pods in the cluster can reach it on port 80 at the Service’s cluster IP or DNS name.
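
As a minimal sketch of the port field, the manifest below defines a cluster-internal Service. The name web-svc and the label app: web are hypothetical and only serve as illustration.

```yaml
# Hypothetical Service; the name and label are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: web-svc
spec:
  selector:
    app: web          # selects Pods labeled app=web
  ports:
    - protocol: TCP
      port: 80        # port the Service exposes inside the cluster
      # targetPort is omitted here, so it defaults to the same value as port (80)
```

Assuming cluster DNS is enabled, other Pods in the same namespace could then reach this Service at http://web-svc:80.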

targetPort

- Definition: The targetPort is the specific port on the Pod(s) that the Service forwards traffic to. It’s where your application inside the Pod is listening for incoming requests.

- Usage: This allows the Service to direct incoming traffic to the correct port on the Pod, regardless of other ports that might be open on the Pod. The targetPort can be a number or the name of a container port defined in the Pod spec. If you omit it, it defaults to the same value as port.

- Example: If your Pod’s application listens on port 8080, the targetPort in the Service that routes traffic to this Pod should be set to 8080.
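
The pairing of port and targetPort might look like the sketch below: a Pod whose container listens on 8080, and a Service that exposes it on 80. The names, label, and image are placeholders, not taken from a real deployment.

```yaml
# Hypothetical Pod; the application inside is assumed to listen on 8080.
apiVersion: v1
kind: Pod
metadata:
  name: web
  labels:
    app: web
spec:
  containers:
    - name: app
      image: example.com/web-app:1.0   # placeholder image
      ports:
        - containerPort: 8080          # port the application listens on
---
# Service that forwards cluster traffic on port 80 to the Pod's port 8080.
apiVersion: v1
kind: Service
metadata:
  name: web-svc
spec:
  selector:
    app: web
  ports:
    - port: 80          # what clients inside the cluster connect to
      targetPort: 8080  # where the traffic lands on the Pod
```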

NodePort

- Definition: When you create a Service of type NodePort, Kubernetes allocates a port from a predefined range (default: 30000-32767), or uses the value you set in the nodePort field if it is free and within that range. This port is opened on every node in the cluster.

- Usage: The NodePort allows external traffic to reach the Service. External clients can access the Service by hitting any of the cluster nodes’ IP addresses at the NodePort. Kubernetes automatically routes this traffic to the Service port, and then to the targetPort on the Pod.

- Example: If a Service is assigned a NodePort of 32100, any external traffic sent to any node’s IP address at port 32100 is forwarded to the Service, and then to the appropriate Pod as per the Service’s targetPort.
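
A NodePort Service matching the example above could be declared roughly as follows. The Service name and selector are hypothetical; pinning nodePort to 32100 is optional, and leaving the field out lets Kubernetes pick a free port from the range.

```yaml
# Hypothetical NodePort Service; name and label are illustrative assumptions.
apiVersion: v1
kind: Service
metadata:
  name: web-nodeport
spec:
  type: NodePort
  selector:
    app: web
  ports:
    - port: 80          # Service port inside the cluster
      targetPort: 8080  # port the application listens on in the Pod
      nodePort: 32100   # port opened on every node (must be in the allowed range)
```

With this in place, an external client would connect to any node’s IP address on port 32100.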

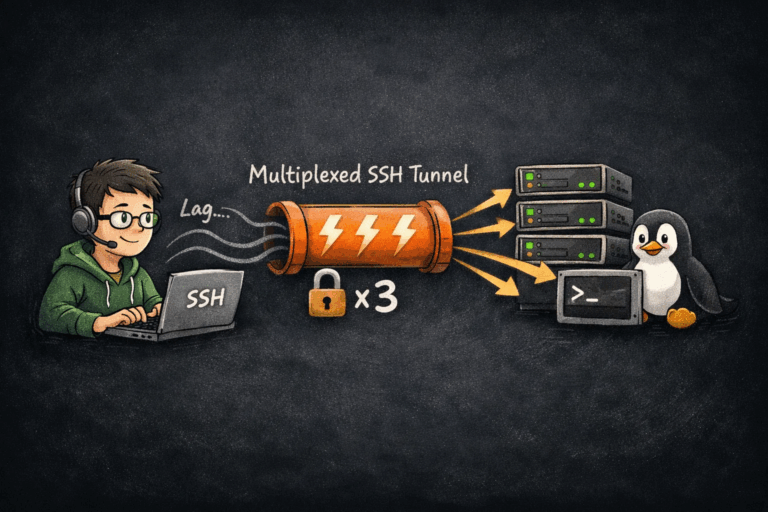

Relationship and Workflow

Here’s how these ports work together in a typical NodePort Service setup:

- External Traffic: Begins by targeting a node’s IP address at the NodePort.

- NodePort: Receives the external traffic on the node and forwards it to the Service’s port.

- Service Port: The Service, listening on this port, routes the traffic to one of its backend Pods.

- targetPort: The Service forwards the traffic to this port on the Pod, where the application is listening.

Diagrammatic Representation

[External Traffic] --> [Node IP:NodePort] --> [Service:Port] --> [Pod:targetPort]

Pro tips for port, targetPort, and NodePort

Following a few best practices when configuring Service ports can improve the security, maintainability, and performance of your applications:

Use Descriptive Port Names

- When defining multiple ports in your Pod specifications, use descriptive names for each port. This improves readability and helps clarify the purpose of each port when referenced in a Service.

```yaml
# Container ports in the Pod spec, each with a descriptive name
ports:
  - name: http
    containerPort: 8080
  - name: metrics
    containerPort: 9090
```
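
Once container ports are named, a Service can refer to them by name instead of by number. The sketch below assumes the Pod’s container ports are named http and metrics as above; the Service name, label, and Service-side port numbers are illustrative.

```yaml
# Hypothetical multi-port Service that targets container ports by name.
apiVersion: v1
kind: Service
metadata:
  name: web-svc
spec:
  selector:
    app: web
  ports:
    - name: http          # each port needs a name when a Service has several
      port: 80
      targetPort: http    # resolves to the container port named "http"
    - name: metrics
      port: 9090
      targetPort: metrics # resolves to the container port named "metrics"
```

A benefit of this design is that the container port number can change in the Pod spec without the Service definition having to change.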

Consistent Port Usage

- Maintain consistency in port usage across your application deployments. If a service typically listens on a certain port, try to standardize that across all instances of that service type to reduce configuration complexity and potential errors.

Leverage Default Ports When Appropriate

- For common protocols like HTTP (80) and HTTPS (443), use the standard ports when exposing Services internally, as this adheres to established conventions and can simplify Service interaction.

Secure NodePort Access

- NodePort services expose applications on high ports (30000-32767) on all nodes, which can pose a security risk. Limit access to these ports using network policies or firewall rules to only allow legitimate traffic.

- Consider using NodePort services only when necessary and prefer LoadBalancer or Ingress for external access, as they offer more control and security features.

Minimize Use of NodePort

- NodePort should be used sparingly, primarily in development environments or when there’s no LoadBalancer available. In production, prefer using Ingress controllers or cloud provider LoadBalancer services for external traffic, as they provide more flexibility and features.
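
As one possible alternative to NodePort, an Ingress can route external HTTP traffic to a Service’s port. This sketch assumes an ingress controller is already installed and registered under the class name nginx; the host name and Service name are placeholders.

```yaml
# Hypothetical Ingress; requires an ingress controller in the cluster.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: web-ingress
spec:
  ingressClassName: nginx        # assumption: a controller with this class exists
  rules:
    - host: app.example.com      # placeholder host name
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: web-svc    # the Service to route to
                port:
                  number: 80     # the Service's port, not the Pod's targetPort
```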

Optimize targetPort Configuration

- The targetPort can be different from the Service’s port, allowing you to run container applications on their native ports while exposing them on more common ports within the cluster. Use this feature to avoid conflicts and to adhere to internal port allocation policies.

Plan for High Availability and Scalability

- When setting up LoadBalancer or NodePort Services, ensure your underlying application Pods are designed for high availability and scalability. Use ReplicaSets or Deployments to manage Pod replicas, ensuring that the Service can effectively distribute traffic across multiple instances.
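
A Deployment that keeps several replicas behind a Service might be sketched like this. The replica count, names, label, and image are illustrative assumptions; the key point is that the Pod template’s labels match the Service’s selector.

```yaml
# Hypothetical Deployment running three replicas of the application.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 3                 # the Service spreads traffic across these Pods
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web              # must match the Service's selector
    spec:
      containers:
        - name: app
          image: example.com/web-app:1.0   # placeholder image
          ports:
            - name: http
              containerPort: 8080
```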

Monitor and Log Access

- Implement monitoring and logging for access to Services, especially those exposed externally via NodePort or LoadBalancer. This helps in identifying potential security breaches and understanding traffic patterns for capacity planning.

Review and Update Regularly

- Regularly review your port configurations and Service definitions as part of your cluster maintenance. Update them as needed to adapt to changing application requirements and to incorporate improvements in Kubernetes networking features.

Use Network Policies for Enhanced Security

- Utilize Kubernetes Network Policies to define fine-grained access controls to Services and Pods, limiting communication based on the principle of least privilege.
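
A least-privilege policy could look roughly like the sketch below, which only admits traffic from Pods labeled role: frontend to the application Pods. The labels and policy name are hypothetical, and the policy only takes effect if the cluster’s network plugin enforces NetworkPolicy.

```yaml
# Hypothetical NetworkPolicy; labels and name are illustrative assumptions.
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-frontend-to-web
spec:
  podSelector:
    matchLabels:
      app: web                # the Pods this policy protects
  policyTypes:
    - Ingress
  ingress:
    - from:
        - podSelector:
            matchLabels:
              role: frontend  # only these Pods may connect
      ports:
        - protocol: TCP
          port: 8080          # the Pod port (targetPort), not the Service port
```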

By following these best practices, you can create a Kubernetes networking setup that is secure, efficient, and aligned with the operational requirements of your applications.

Understanding the distinction and interplay between port, targetPort, and NodePort is essential for configuring Services in Kubernetes, ensuring that your applications are accessible both within the cluster and from the external world as needed.